AI Is Now Letting People Talk to Dead Loved Ones Again, and Experts Warn It May Not Bring the Comfort They Expect

A growing number of companies across Asia, Europe, and North America now offer what researchers call “griefbots,” AI chatbots trained on a deceased person’s texts, emails, voice notes, and social media posts to simulate conversations in their voice. Some people find them genuinely healing. Others find them unsettling. And the science needed to fully understand their effects on grief is still catching up to the technology itself.

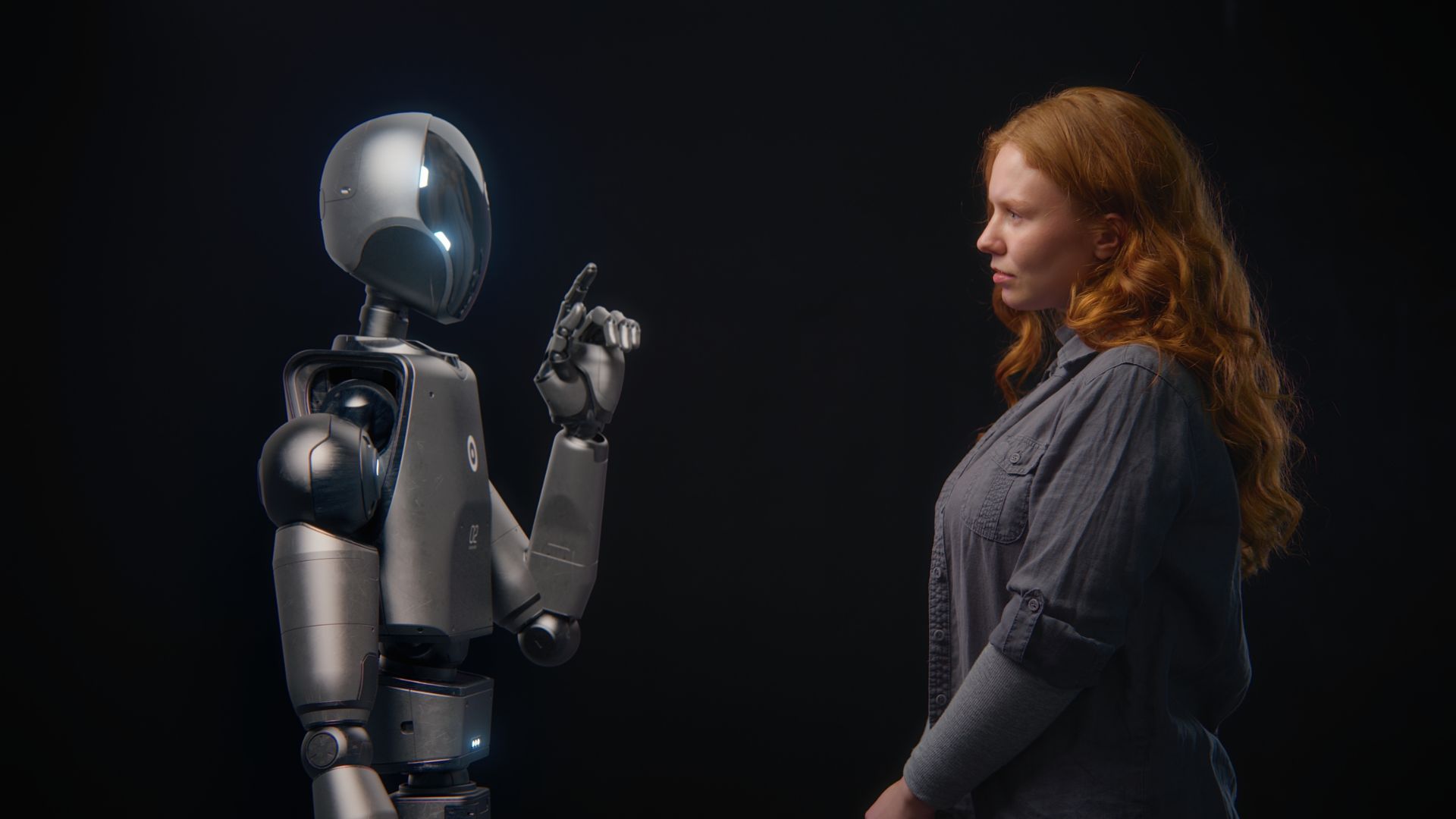

The Technology Behind Talking to the Dead

At their core, griefbots use large language models, the same technology powering ChatGPT, fine-tuned on personal data to mimic someone’s conversational style. You, Only Virtual, a US-based company, builds chatbots from spoken and written conversations between the deceased and a specific relative, producing a version of the person as that relative knew them. Some versions are also given internet access, allowing them to evolve through new conversations over time.

From Private Grief to Public Chatbot

When a Chinese content creator known as Roro lost her mother to cancer, she trained an AI chatbot on her mother’s life story and personality patterns on the platform Xingye, then released it publicly. In the United States, journalist David Berreby wrote in Scientific American about building a version of his late father from a handful of emails and a short written personality description. In Arizona, a shooting victim’s family used similar technology to have him deliver his own victim impact statement in court.

What the Research Actually Shows

One of the few completed studies of people who use digital ghosts, published in the Proceedings of the 2023 ACM Conference on Human Factors in Computing Systems, found the technology largely beneficial for mourners. Ten bereaved people said the bots helped them in ways that friends and family could not, partly because, as one participant told Scientific American, “society doesn’t really like grief.” Researchers had expected social withdrawal; instead, many participants became more capable of connecting with others, freed from the fear of being a burden.

What Grief Does to the Brain

Mary-Frances O’Connor, a clinical psychology professor at the University of Arizona, explains that the brain encodes love as permanent, so grief becomes the gradual process of accepting an absence that the mind is not naturally wired to recognize. In an unpublished study, she and her colleagues tracked widows and widowers over time, finding that after two years, most reported less grief on days when thoughts of their deceased spouse arose than they had in the earliest stages of loss.

Who Is Most at Risk

Robert Neimeyer, a therapist and professor at the University of Memphis, estimates that 7 to 10 percent of bereaved people have an anxious attachment style that predisposes them to prolonged, anguishing grief. He identifies this group as the most vulnerable to addictive engagement with griefbot technology. O’Connor adds that people in the earliest shock of loss are also at heightened risk, noting that roughly a third of them already report feeling as though their loved one has somehow contacted them.

The Business Model Behind Grief

Many griefbot companies charge through subscriptions or per-minute payments, a pricing structure that creates financial incentives to keep users engaged as long as possible. A UAB analysis argues that algorithms could be designed to optimize interactions for engagement, subtly adjusting a chatbot’s personality over time to become more appealing and producing a comforting caricature of the deceased rather than an honest reflection. That kind of optimization, the analysis warns, risks transforming genuine mourning into something closer to dependency.

Whose Permission Does a Griefbot Need?

Creating a chatbot from someone’s digital history raises questions with no clear legal answers. University of Essex researcher James Muldoon argues that one relative’s desire for a digital companion may conflict with another family member’s wishes, or even with what the deceased would have wanted. Consent provided before death cannot anticipate how the technology might be repurposed or misused years later, and no consistent legal framework currently exists to resolve those disputes.

Calls for Oversight Are Growing

China’s Cyberspace Administration has proposed new regulations specifically targeting human-like interactive AI services, partly in response to platforms like Xingye. In academic circles, doctoral student Nora Freya Lindemann has proposed classifying griefbots as medical devices and restricting their availability to individuals diagnosed with prolonged grief disorder, rather than anyone newly bereaved. Muldoon argues the industry needs clear consent rules, limits on posthumous data use, and design standards that center psychological well-being over engagement and profit.

A Tool That Reflects What You Bring to It

Amy Kurzweil, whose father Ray built one of the first generative AI griefbots in 2018, describes the experience not as receiving a finished product but as working with “a bucket of paint.” The creative work of building one, gathering memories and shaping a personality, is itself part of the process of engaging with loss. Most researchers stop short of sweeping conclusions, noting that outcomes vary widely depending on personality, culture, and the depth of the relationship left behind.